OpenAI has announced a series of updates to its models and developer platform, including a new flagship model, lower prices, and several new APIs. The main changes are summarized below.

GPT-4 Turbo: A New Era of AI Models

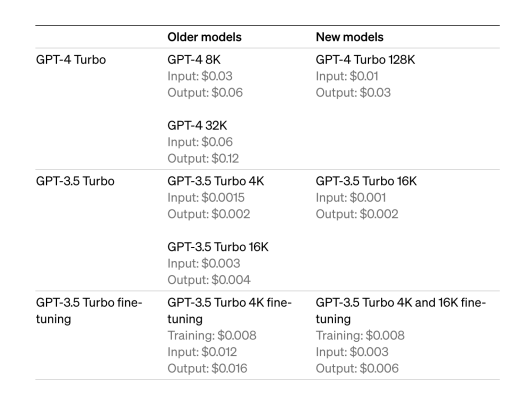

The headline release is GPT-4 Turbo, a model that is both more capable and cheaper than its predecessors. Its 128K-token context window fits the equivalent of more than 300 pages of text in a single prompt, so the model can draw on far more context when generating a response. Compared with GPT-4, input tokens are 3x cheaper and output tokens are 2x cheaper.

Source: OpenAI
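To make the price difference concrete, here is a small worked example. The per-token prices are the launch list prices as published at the time of the announcement and should be checked against the current pricing page; the `gpt-4-turbo` key and the `request_cost` helper are illustrative names, not part of any API.

```python
# Launch list prices in USD per 1,000 tokens (assumption: verify against
# the current OpenAI pricing page before relying on these numbers).
PRICES_PER_1K = {
    "gpt-4": {"input": 0.03, "output": 0.06},
    "gpt-4-turbo": {"input": 0.01, "output": 0.03},  # illustrative key name
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the cost in USD of one request for the given token counts."""
    price = PRICES_PER_1K[model]
    return (input_tokens / 1000) * price["input"] + (output_tokens / 1000) * price["output"]


# Same hypothetical workload priced on both models.
gpt4_cost = request_cost("gpt-4", input_tokens=6_000, output_tokens=1_000)
turbo_cost = request_cost("gpt-4-turbo", input_tokens=6_000, output_tokens=1_000)

print(f"GPT-4:       ${gpt4_cost:.2f}")
print(f"GPT-4 Turbo: ${turbo_cost:.2f}")
```

Because input and output are discounted by different factors, the overall saving depends on the ratio of prompt tokens to completion tokens in a given workload.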

Updated Knowledge Cutoff Date

GPT-4 Turbo's knowledge cutoff has moved forward to April 2023, so the model is aware of more recent events than earlier GPT-4 versions.

Function Calling Updates

Function calling has received a major upgrade. The model can now request multiple function calls in a single message, so an application can handle a request that needs several actions in one round trip instead of several.
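The sketch below shows how an application might handle a reply that contains more than one function call. It makes no network request: `assistant_message` is a hand-written sample in the shape of a Chat Completions assistant message with `tool_calls`, and `get_weather`, `get_time`, and `run_tool_calls` are hypothetical helpers introduced for illustration.

```python
import json


def get_weather(city: str) -> dict:
    """Stub standing in for a real weather lookup."""
    return {"city": city, "forecast": "sunny"}


def get_time(city: str) -> dict:
    """Stub standing in for a real clock lookup."""
    return {"city": city, "time": "12:00"}


# Map the function names exposed to the model onto local callables.
LOCAL_FUNCTIONS = {"get_weather": get_weather, "get_time": get_time}

# Hand-written sample of an assistant message requesting two calls at once.
assistant_message = {
    "role": "assistant",
    "content": None,
    "tool_calls": [
        {"id": "call_1", "type": "function",
         "function": {"name": "get_weather", "arguments": '{"city": "Paris"}'}},
        {"id": "call_2", "type": "function",
         "function": {"name": "get_time", "arguments": '{"city": "Tokyo"}'}},
    ],
}


def run_tool_calls(message: dict) -> list:
    """Execute every requested call and build one 'tool' reply per call."""
    replies = []
    for call in message.get("tool_calls") or []:
        function = LOCAL_FUNCTIONS[call["function"]["name"]]
        arguments = json.loads(call["function"]["arguments"])  # arguments arrive as a JSON string
        result = function(**arguments)
        replies.append({
            "role": "tool",
            "tool_call_id": call["id"],  # ties the result back to the request
            "content": json.dumps(result),
        })
    return replies


tool_replies = run_tool_calls(assistant_message)
```

In a real application the replies would be appended to the conversation and sent back to the model so it can compose a final answer from all results.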

Improved Instruction Following and JSON Mode

GPT-4 Turbo follows instructions more reliably than earlier models and introduces JSON mode, which constrains the model to return syntactically valid JSON. The new ‘seed’ parameter makes outputs reproducible in most cases, which helps with debugging and with building consistent user experiences. OpenAI also plans to launch a feature that returns the log probabilities of output tokens, giving developers more insight into the model’s choices.
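As a rough sketch of how JSON mode and the seed parameter fit into a request, the snippet below builds the keyword arguments for a Chat Completions call and parses a sample reply. The helpers `build_json_request` and `parse_reply` are hypothetical, the model name is the preview identifier used at launch and may have changed, and the actual API call is left as a comment because it needs the `openai` package and an API key.

```python
import json


def build_json_request(prompt: str, seed: int = 42) -> dict:
    """Build keyword arguments for a JSON-mode, seeded chat completion."""
    return {
        "model": "gpt-4-1106-preview",  # launch-time preview name; may have changed
        "seed": seed,  # same seed and same parameters aim for the same output
        "response_format": {"type": "json_object"},  # turns on JSON mode
        "messages": [
            # JSON mode expects the word "JSON" to appear somewhere in the messages.
            {"role": "system", "content": "Reply with a JSON object."},
            {"role": "user", "content": prompt},
        ],
    }


def parse_reply(content: str) -> dict:
    """Parse the model's reply; JSON mode should make this succeed."""
    return json.loads(content)


request = build_json_request("List three primary colors under the key 'colors'.")

# With the openai package (v1.x) installed and an API key configured,
# the call would look roughly like this:
#   from openai import OpenAI
#   completion = OpenAI().chat.completions.create(**request)
#   data = parse_reply(completion.choices[0].message.content)

# Stand-in reply so the parsing step can be shown without a network call.
sample = parse_reply('{"colors": ["red", "green", "blue"]}')
```

Reproducibility with a seed is best effort, so it is worth treating identical outputs as likely rather than guaranteed.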

Updated GPT-3.5 Turbo

GPT-3.5 Turbo has received its own upgrades, including a default 16K context window and a 38% improvement on format-following tasks such as generating JSON, XML, and YAML.

New Modalities: Vision, Image Generation, and Text to Speech

The platform now goes beyond text. GPT-4 Turbo can accept images as input, and DALL·E 3 lets developers integrate image generation into their applications. The Text-to-Speech API offers six preset voices and a choice of quality levels, making AI interactions sound more human-like.
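For the Text-to-Speech API, a request mainly consists of a model, a voice, and the input text. The sketch below validates those choices before a call is made; `build_speech_request` is a hypothetical helper, the voice and model names are the ones documented at launch, and the real call is shown only as a comment.

```python
# Preset voices and model variants as documented at launch
# (assumption: check the current API reference for changes).
VOICES = ("alloy", "echo", "fable", "onyx", "nova", "shimmer")
STANDARD_MODEL = "tts-1"
HIGH_QUALITY_MODEL = "tts-1-hd"


def build_speech_request(text: str, voice: str = "alloy", high_quality: bool = False) -> dict:
    """Build keyword arguments for a text-to-speech request."""
    if voice not in VOICES:
        raise ValueError(f"unknown voice: {voice!r}")
    return {
        "model": HIGH_QUALITY_MODEL if high_quality else STANDARD_MODEL,
        "voice": voice,
        "input": text,
    }


speech_request = build_speech_request(
    "Hello from the Text-to-Speech API.", voice="nova", high_quality=True
)

# With the openai package (v1.x) and an API key configured:
#   from openai import OpenAI
#   audio = OpenAI().audio.speech.create(**speech_request)
```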

Assistants API: Build AI-Powered Apps with Ease

OpenAI introduces the Assistants API, enabling the creation of AI-driven experiences that leverage advanced capabilities like Code Interpreter and Retrieval. This API represents a leap towards creating more dynamic and specialized AI tools.

Build Custom GPTs

For specialized needs, OpenAI presents the opportunity to build custom GPTs through an experimental access program, offering unparalleled customization.

GPT Marketplace

The GPT Marketplace emerges as a hub for developers to access a variety of GPT-powered applications, fostering a community of innovation and collaboration.

Copyright Shield

OpenAI introduces Copyright Shield, under which it will defend customers facing copyright infringement claims and cover the costs incurred, a move that reflects its commitment to user protection.

Whisper 3

Whisper 3 is the next version of OpenAI’s open source automatic speech recognition (ASR) model and promises improved performance across languages. OpenAI also announced that support for Whisper V3 through the API is coming later this year, underlining its commitment to multi-modal AI.

SDK Updates

Python SDK v1.0 streamlines OpenAI development by adding automatic retries with backoff for better error resilience and type annotations for more reliable code. It moves away from global defaults, so developers instantiate client objects directly and configure each one independently. Additionally, the Weights & Biases functionality is now packaged more cleanly, which simplifies performance tracking and analysis. Together these changes make for a more robust and efficient coding environment.
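To illustrate what retrying with backoff means in practice, here is a simplified, standalone sketch of the idea. It is not the SDK's implementation: `backoff_delays` and `call_with_retry` are hypothetical helpers, and the delay values are arbitrary. In the SDK itself the behaviour is configured on the client object rather than written by hand.

```python
import time


def backoff_delays(max_retries: int, base: float = 0.5, cap: float = 8.0) -> list:
    """Exponentially growing wait times in seconds, capped at `cap`."""
    return [min(cap, base * 2 ** attempt) for attempt in range(max_retries)]


def call_with_retry(fn, max_retries: int = 2, sleep=time.sleep):
    """Call fn(), retrying transient failures with exponential backoff."""
    delays = backoff_delays(max_retries)
    for attempt in range(max_retries + 1):
        try:
            return fn()
        except ConnectionError:
            if attempt == max_retries:
                raise  # out of retries: surface the error to the caller
            sleep(delays[attempt])


# Demo: a function that fails twice before succeeding.
attempts = {"count": 0}


def flaky():
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise ConnectionError("transient failure")
    return "ok"


waits = []  # record the waits instead of actually sleeping
result = call_with_retry(flaky, max_retries=2, sleep=waits.append)
```

Passing `sleep` as a parameter keeps the demo fast and makes the wait schedule easy to inspect; production code would typically also add random jitter to the delays.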

As OpenAI continues to push the boundaries of what’s possible with AI, it’s clear that the future is now. Whether you’re a developer looking to build cutting-edge apps or a business aiming to leverage AI for growth, these updates offer the tools to bring your visions to life. Stay tuned as these features roll out, marking a new chapter in the era of artificial intelligence.

The original blog by OpenAI can be found here.
